n8n Zoom Transcript Analysis Workflow (and Download)

Meeting transcripts are often packed with valuable information, much of which can be missed during a conversation. Items mentioned in passing that deserve more time than they were given, statements that are clearer or more powerful on paper than spoken, and serious points masked by humor come up all the time - and the ability to extract the good stuff from them can lead to solving problems that might otherwise be overlooked:

https://github.com/Foundry81/n8n-zoom-transcript-analysis

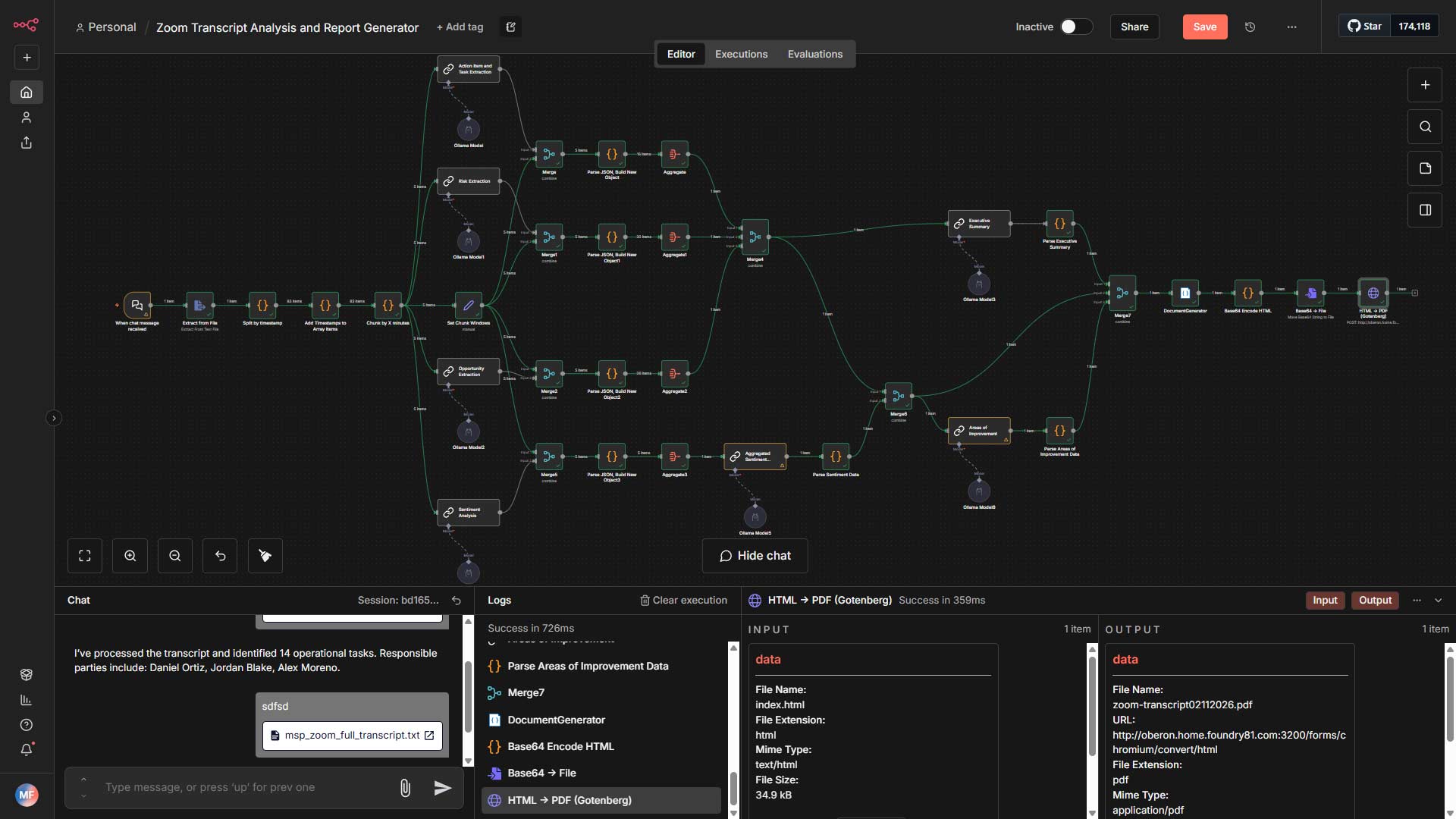

This n8n workflow automates the analysis of client meeting transcripts for Managed Service Providers (MSPs) - and can be adapted for other professional service organizations. It leverages LLMs (I used Ollama/Gemma3:12 while building it) to extract actionable insights, operational risks, business opportunities, and sentiment analysis, ultimately generating an executive-ready report in HTML and PDF formats.

The Basics

I started the workflow with a chat-based trigger since it provides an option to upload a file - and, at some point, I’ll update it to incorporate a chat message into the LLM prompts for added context.

Let’s say you’re an account manager at an MSP and you’re prepping a QBR - the ability to provide that information to the LLM can help it weight its analysis and output toward account management. Maybe you’re a service director and have slightly different needs - great, pop them into the chat along with the transcript and you’re going to get what you want.

That’s for later though - back to the now. Once the workflow receives the transcript, it chunks it into 1-minute segments (change this to 5 minutes or so for real-world use) and runs each chunk through several prompts. The output from each prompt gets parsed and consolidated into a single JSON object that gets applied to an HTML template. Once the template has been populated, it’s passed off to Gotenberg and returned as a PDF file ready to be emailed, pushed to another service, or simply downloaded.
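To make the chunking step a bit more concrete, here is a minimal Python sketch of time-window chunking. It is not the code from the workflow itself: it assumes the transcript is a WebVTT file (the format Zoom typically exports), and the function name `chunk_transcript` and the `window_seconds` parameter are made up for illustration.

```python
import re

# Matches a WebVTT cue timing line such as "00:01:05.250 --> 00:01:09.000".
CUE_RE = re.compile(r"(\d{2}):(\d{2}):(\d{2})\.\d{3}\s+-->")


def chunk_transcript(vtt_text: str, window_seconds: int = 60) -> list[str]:
    """Group cue text into time windows, keyed by each cue's start time.

    Hypothetical helper: assumes WebVTT input with HH:MM:SS.mmm timestamps.
    """
    buckets: dict[int, list[str]] = {}
    current = None  # index of the window the current cue belongs to
    for raw in vtt_text.splitlines():
        line = raw.strip()
        match = CUE_RE.match(line)
        if match:
            hours, minutes, seconds = (int(g) for g in match.groups())
            start = hours * 3600 + minutes * 60 + seconds
            current = start // window_seconds
            continue
        # Skip the "WEBVTT" header, blank lines, and numeric cue IDs.
        # (A spoken line made up only of digits would also be skipped.)
        if current is None or not line or line.isdigit():
            continue
        buckets.setdefault(current, []).append(line)
    # One string per non-empty window, in chronological order.
    return [" ".join(buckets[key]) for key in sorted(buckets)]
```

Calling `chunk_transcript(text, window_seconds=300)` would give the 5-minute segments suggested above; each returned string is what would then be fed to the prompts.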

Self Hosted or Not, Your Call

I built the workflow with self-hosted instances of n8n, Ollama, and Gotenberg to keep my transcripts local. While doing so provides a high level of control over your data, you can use it on a hosted version of n8n, swap out the LLMs with ones provided by OpenAI, Google, or other providers, and there are plenty of online services that’ll convert HTML to a PDF in place of Gotenberg. Set it up however you want; just be sure to treat your data with care.
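If you go the local route, the LLM call boils down to one HTTP request against your own Ollama instance. The sketch below shows roughly what that looks like outside of n8n, using Ollama's `/api/generate` endpoint. The model tag `gemma3:12b`, the default base URL, and both function names are assumptions for illustration; inside the workflow, n8n's own nodes handle this for you.

```python
import json
import urllib.request


def build_generate_request(
    prompt: str,
    model: str = "gemma3:12b",                # assumed model tag
    base_url: str = "http://localhost:11434",  # Ollama's default local port
) -> urllib.request.Request:
    """Build a non-streaming request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, **kwargs) -> str:
    """Send the prompt and return the model's text (needs a running Ollama)."""
    with urllib.request.urlopen(build_generate_request(prompt, **kwargs)) as resp:
        return json.loads(resp.read())["response"]
```

Because the host is just a parameter, pointing `base_url` at a different machine is a one-line change, and the transcript never has to leave your network. Swapping to a hosted provider would mean a different endpoint and payload shape, so treat this as Ollama-specific.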

Modify it For Your Needs

This workflow was designed with MSPs in mind, but can easily be modified to work for other types of service-based organizations by adjusting the prompts to fit your needs and goals. If a prompt doesn’t fit your needs at all, just remove it from the workflow and adjust the HTML template so the section isn’t included in the output. That’s it!
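Here is a rough Python sketch of that idea: per-chunk results get merged into one object, and the report only renders the sections you keep. The section keys and titles are hypothetical (loosely based on the insights, risks, opportunities, and sentiment mentioned earlier), and the real workflow does this with n8n nodes and its own HTML template rather than this code.

```python
from html import escape

# Hypothetical section keys and titles; delete an entry to drop that section.
SECTIONS = {
    "insights": "Actionable Insights",
    "risks": "Operational Risks",
    "opportunities": "Business Opportunities",
    "sentiment": "Sentiment",
}


def consolidate(chunk_results: list[dict], sections: dict = SECTIONS) -> dict:
    """Merge the parsed output of every chunk into one object per section."""
    merged = {key: [] for key in sections}
    for result in chunk_results:
        for key in sections:
            merged[key].extend(result.get(key, []))
    return merged


def render_report(merged: dict, sections: dict = SECTIONS) -> str:
    """Render only the configured sections that actually have findings."""
    parts = ["<html><body><h1>Meeting Analysis</h1>"]
    for key, title in sections.items():
        items = merged.get(key)
        if not items:
            continue  # skip removed or empty sections entirely
        parts.append(f"<h2>{escape(title)}</h2><ul>")
        parts.extend(f"<li>{escape(item)}</li>" for item in items)
        parts.append("</ul>")
    parts.append("</body></html>")
    return "".join(parts)
```

Dropping a prompt then means removing one entry from the section map; the matching heading disappears from the output without any other change to the template logic.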

Have an idea how this can be improved or be made more flexible? Shoot me a message and I’ll work it into the next batch of updates!
